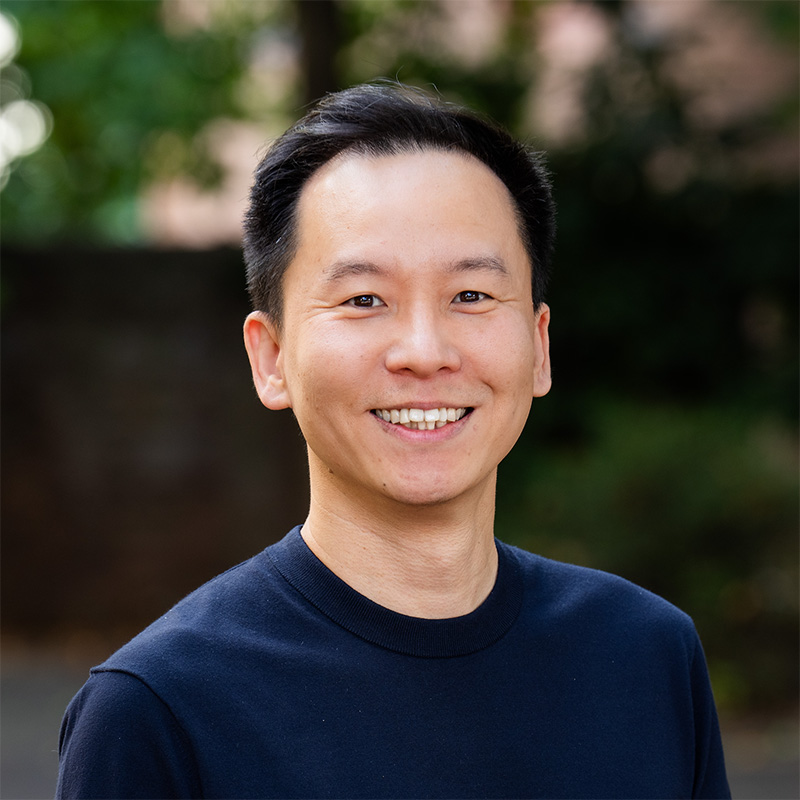

About

I am an Assistant Professor in the Department of Computer Science and Engineering at Ulsan National Institute of Science and Technology (UNIST), where I lead the Ultimate Interface Lab to develop technologies for better user interfaces. I am also a Visiting Assistant Professor in the Department of Computer Science at the University of Virginia, where I spent several years as an Assistant Professor before joining UNIST.

Research

I am a Human-Computer Interaction researcher, and my vision is to build the Ultimate Interface: one that is expressive, efficient, and frictionless, and that connects a person not only with a computer but also with the physical world.

In my research group, we develop technologies and build interactive systems for various contexts to achieve this vision.

Find more about our research on the Ultimate Interface Lab website.

Teaching

UNIST

University of Virginia

Besides Research

🏀 Basketball

I love playing basketball. Fun fact: I've participated in in-school basketball competitions from high school through grad school, and at UVA as a professor. I didn't win any of them; the closest I came was at the UVA ACM students vs. professors event, where our team made it to the final (it was more of a friendly event).

🚲 Cycling

I also love riding bikes of all kinds. I used to do tricks on BMX bikes and mountain bikes. While in college, I completed two long-distance bike trips: along the east coast of South Korea from Gangneung to Busan, and along the east coast of Australia from Brisbane to Sydney. I'm not as strong as I was then, but I still enjoy riding.